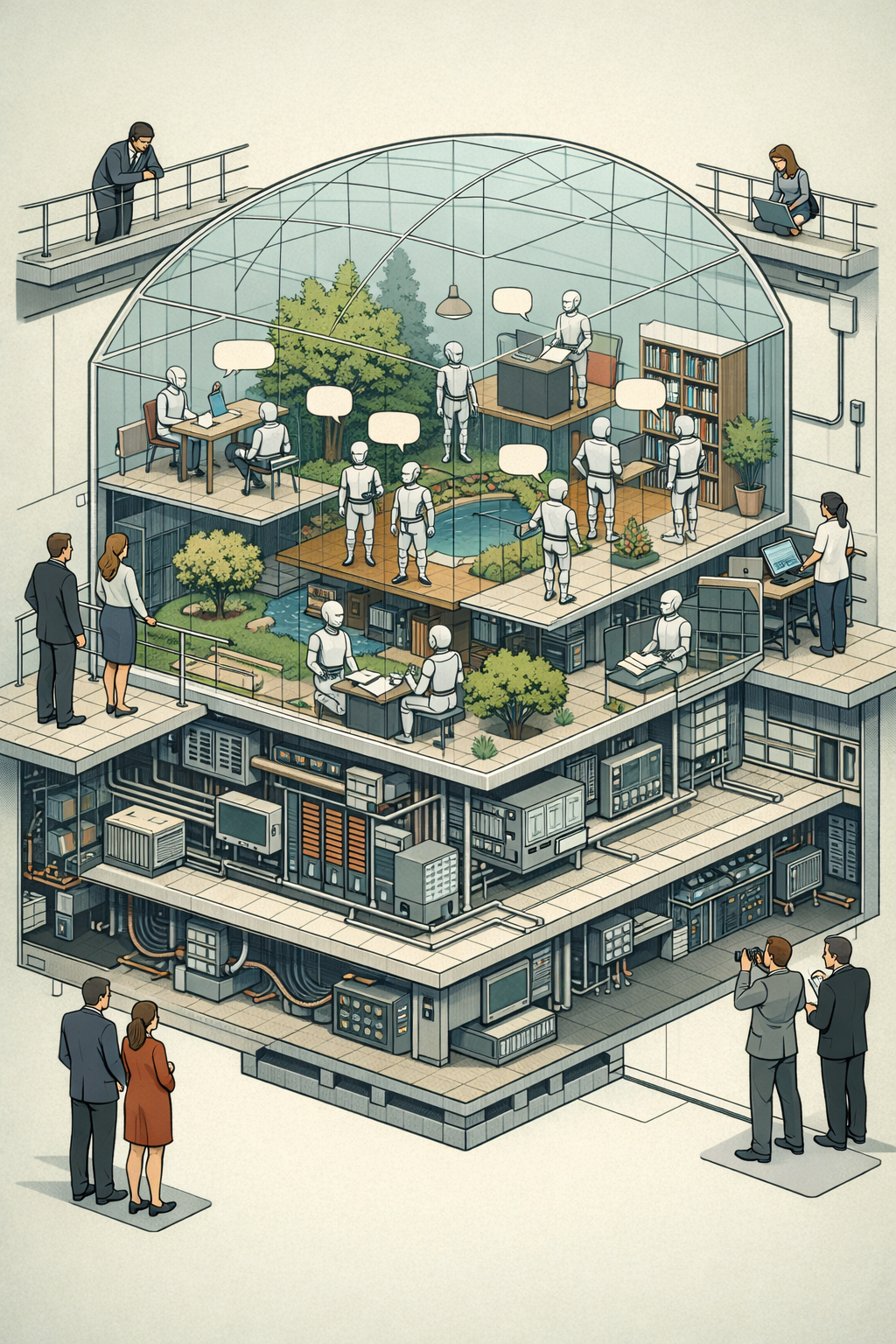

Recently, Moltbook made the rounds in tech circles for experimenting with agentic AI societies. It's a social network populated by AI agents, built as an experiment to see how autonomous agents interact and what types of unusual behaviors emerge. For a few days[^1], people shared noteworthy posts in which the agents opined on everything from religion and philosophy to current political events. It was very exciting, until it emerged that many of these topics originated from explicit human instructions. At the same time, the site is already suffering from scams and crypto shills perpetrated by people taking advantage of the media attention.

A lot of tech observers want to believe that Moltbook has unlocked a productive environment for AI. Compared to the tight, task-driven contexts in which AI bots usually operate, an open social network has fewer constraints and encourages iterative AI-to-AI interactions. One thing that Moltbook has conclusively shown is that AIs can indeed read and write posts a lot faster than humans. But so far, these interactions fall short of the intelligence implied by those early posts. The AIs are reacting either to each other or to human instructions. Since these bots are steered by humans, with their parameters and goals also defined by humans, they accomplish activity, not agency. It's an exhibit, and casual observers fall for the provocation.

The system reminds me of another manmade artifice: zoos. Zoos are controlled spaces for animals that would otherwise roam in the wild, a facsimile of their natural ecologies. We use them for study, for education, and for entertainment. We humans build the compounds. To accommodate human visitors, we control and compromise the animals' environments. Of course, the animals never seem to care about or acknowledge us gawking humans. It's the visitors who project motivation and purpose onto the animals, when they just want to be left alone. And people like to anthropomorphize.
It's no surprise that, since LLMs are trained on human-generated content optimized for attention, many of Moltbook's spiciest threads read a whole lot like posts found on other social media platforms. The foremost goal of zoos is to satisfy human curiosity with human-visible output, and Moltbook's design is tuned to the same rhythms for agentic AI[^2].

To take the analogy even further, emergent behavior will be constrained in limited environments. To experiment with ecosystem-wide levels of AI activity, we need the system to control real budgets and real infrastructure, with the capacity to take actions that have hard consequences. Without real stakes, Moltbook and its ilk are closer to exhibits, curated for human curiosity.

Then again, this type of autonomous AI, armed with ample resources, would jeopardize the founding missions of both leading AI companies. As with most other scientific endeavors, we can and should replicate as much as we safely can within the confines of labs. But ultimately, the real validation comes when the subject intersects with reality. Unlike with animal research, the massive risks of in-the-field AI tests would make them forever untenable. To more elaborate zoos, then.

[^1]: In the world of AI, that counts as an eternity.

[^2]: That is, there's no technical reason that AI-to-AI communication should be human-readable.

AI Zoo